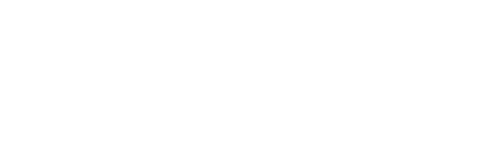

At EnergyHub, I led the end-to-end redesign of the ChargingRewards app to reduce opt-outs and rebuild user trust. EV drivers were confused by the app’s logic, lacked visibility into rewards, and didn’t feel in control. That confusion drove churn, which threatened both product ROI and grid stability. Working with a lean cross-functional team, I drove UX research, strategy, design, and testing. Our solution focused on transparency, motivation, and behavioral confidence. It ultimately reduced opt-outs by 46% and earned a 4.7/5 trust rating during the beta.

TL;DR

Introduction

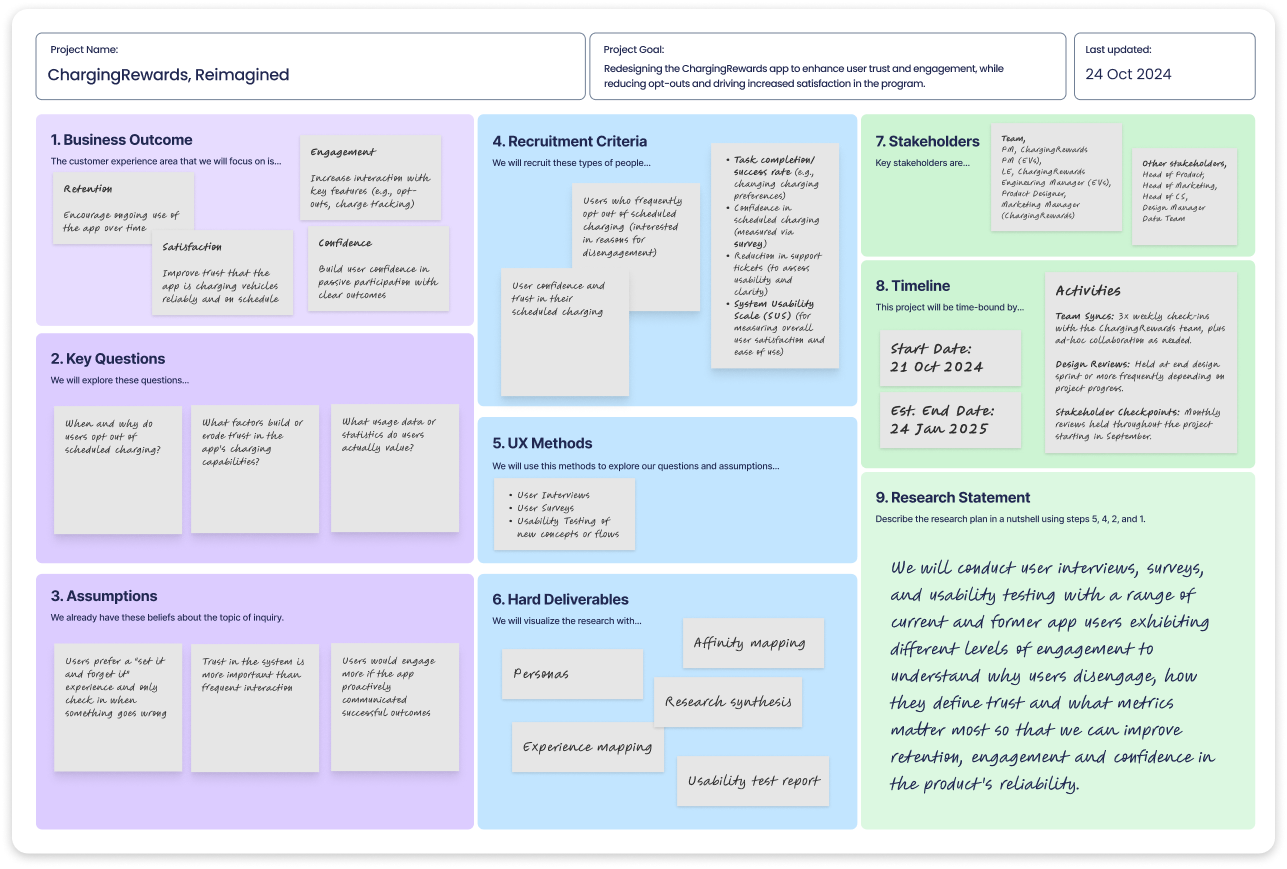

EVs are reshaping the grid—but when everyone plugs in after work, utilities face dangerous demand spikes. ChargingRewards was designed to fix this by incentivizing smart, delayed charging. But users didn’t understand it, didn’t trust it, and were opting out in high numbers.

I led the end-to-end redesign as the sole designer on a lean team. I owned research, UX, strategy, and delivery, rebuilding trust and reducing opt-outs under tight constraints.

This wasn’t just a UX issue—it was a behavioral confidence problem with real impact. Every opt-out strained grid reliability. Our goal: rebuild trust fast, with limited resources and high stakes.

Discovery & Research

Strategy & Ideation

Execution

What seemed like a usability issue was actually a trust problem.

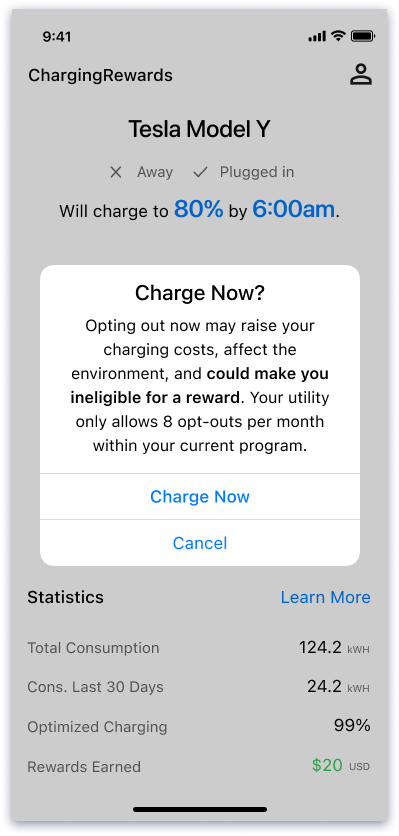

Users weren’t just confused by the interface—they didn’t fully understand how managed charging worked, and they didn’t trust a system making decisions behind the scenes. This lack of confidence led many to opt out, threatening both the user experience and the program’s ROI.

So I reframed the challenge:

- How might we build trust and make the invisible benefits of managed charging feel real and worth staying opted in for?

This shift—from usability to behavioral design—became the foundation for every decision moving forward.

Designing for Trust: From Quick Wins to Foundational Redesign

Redesigning the core experience

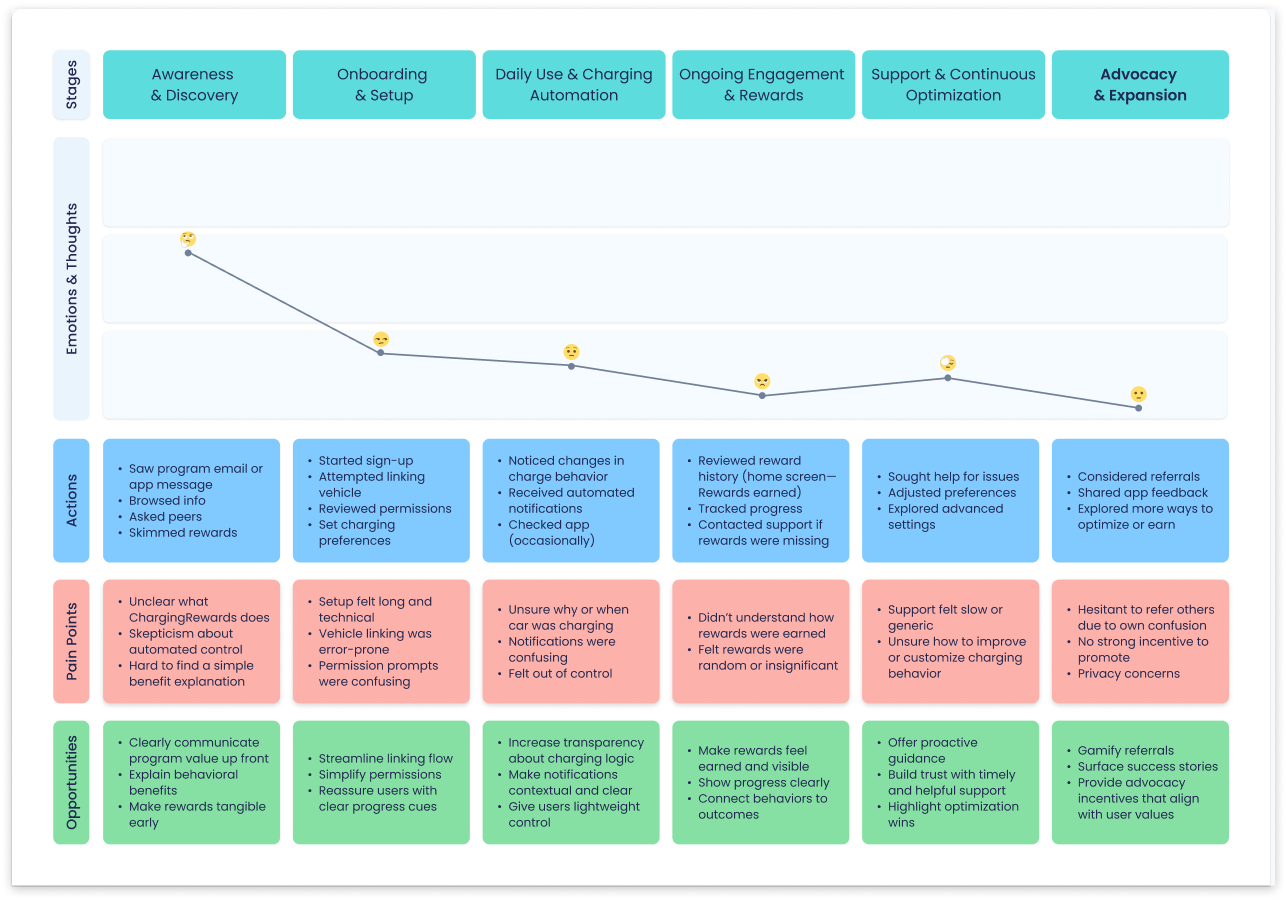

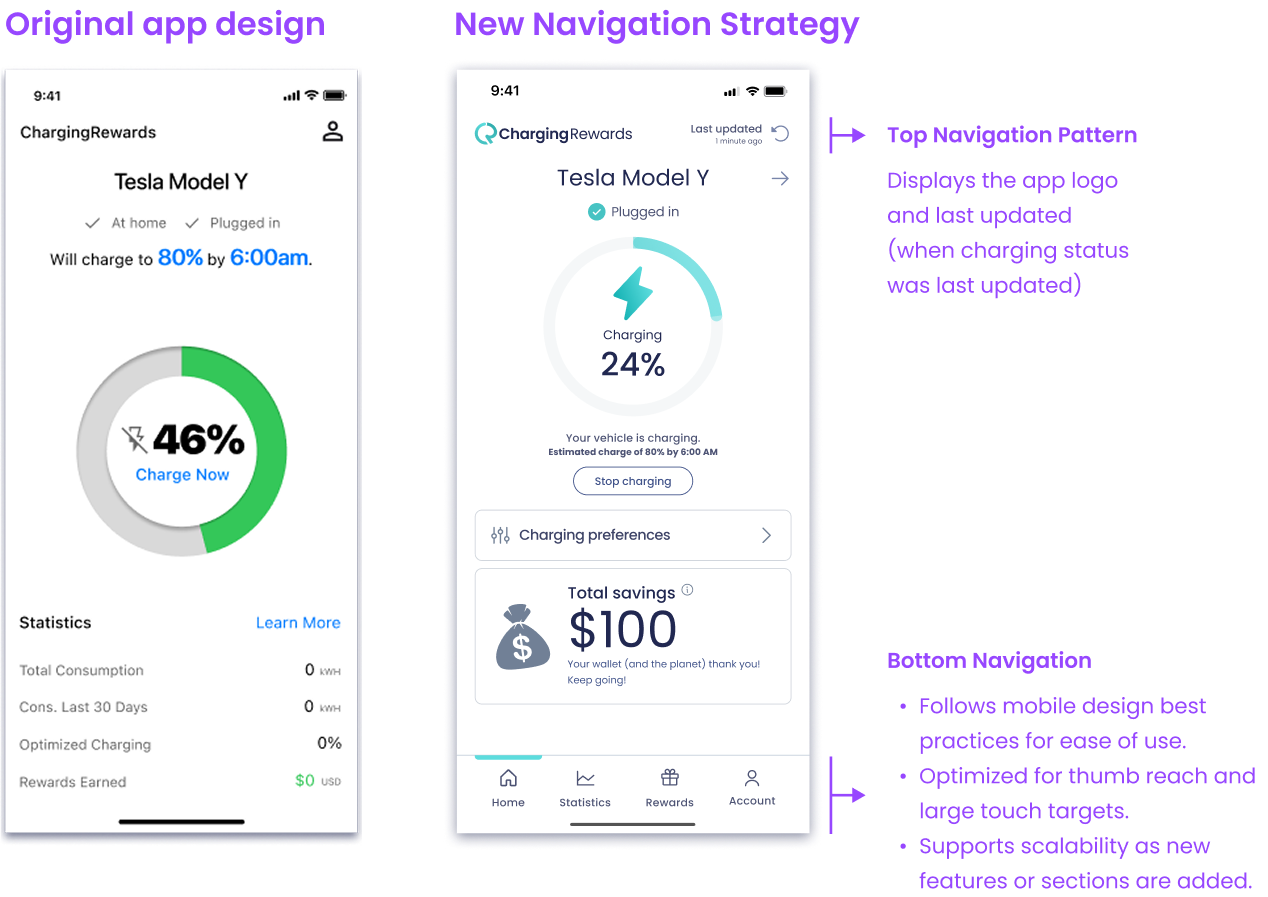

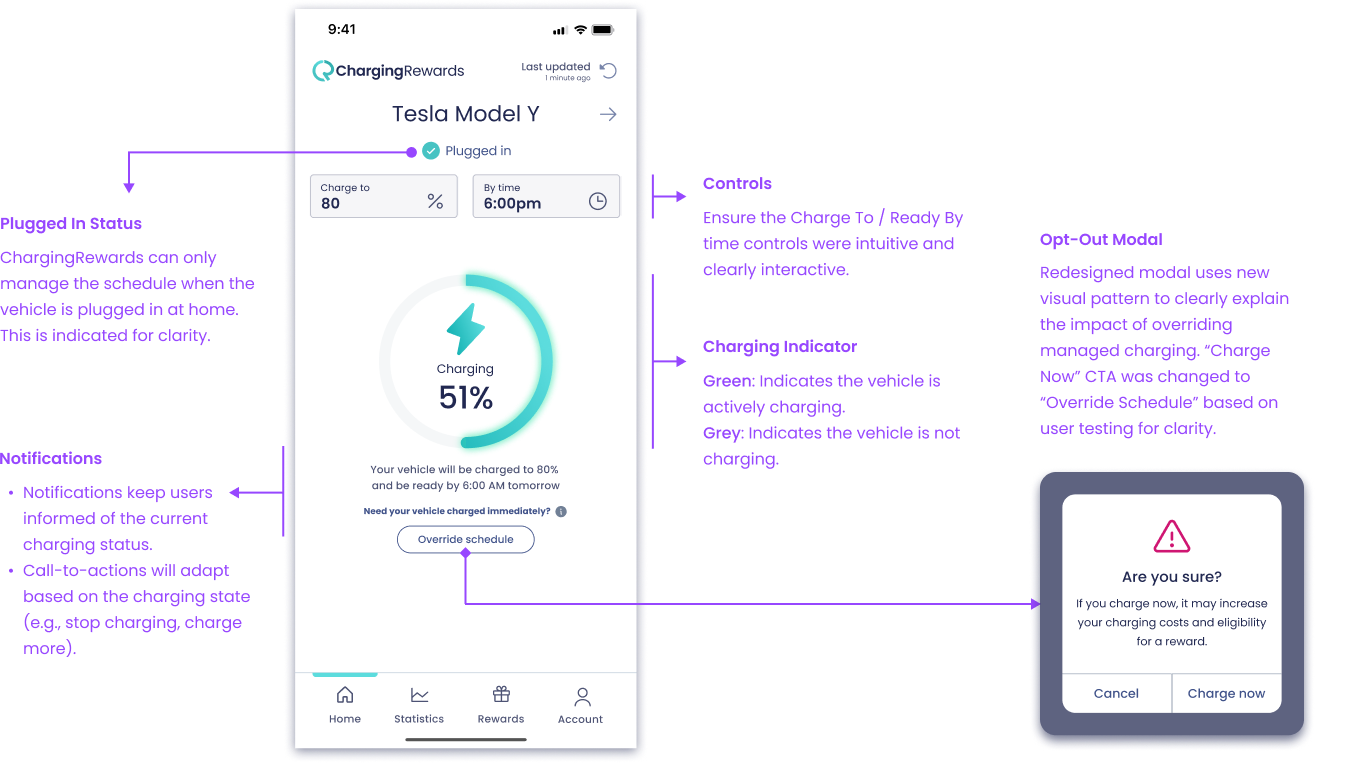

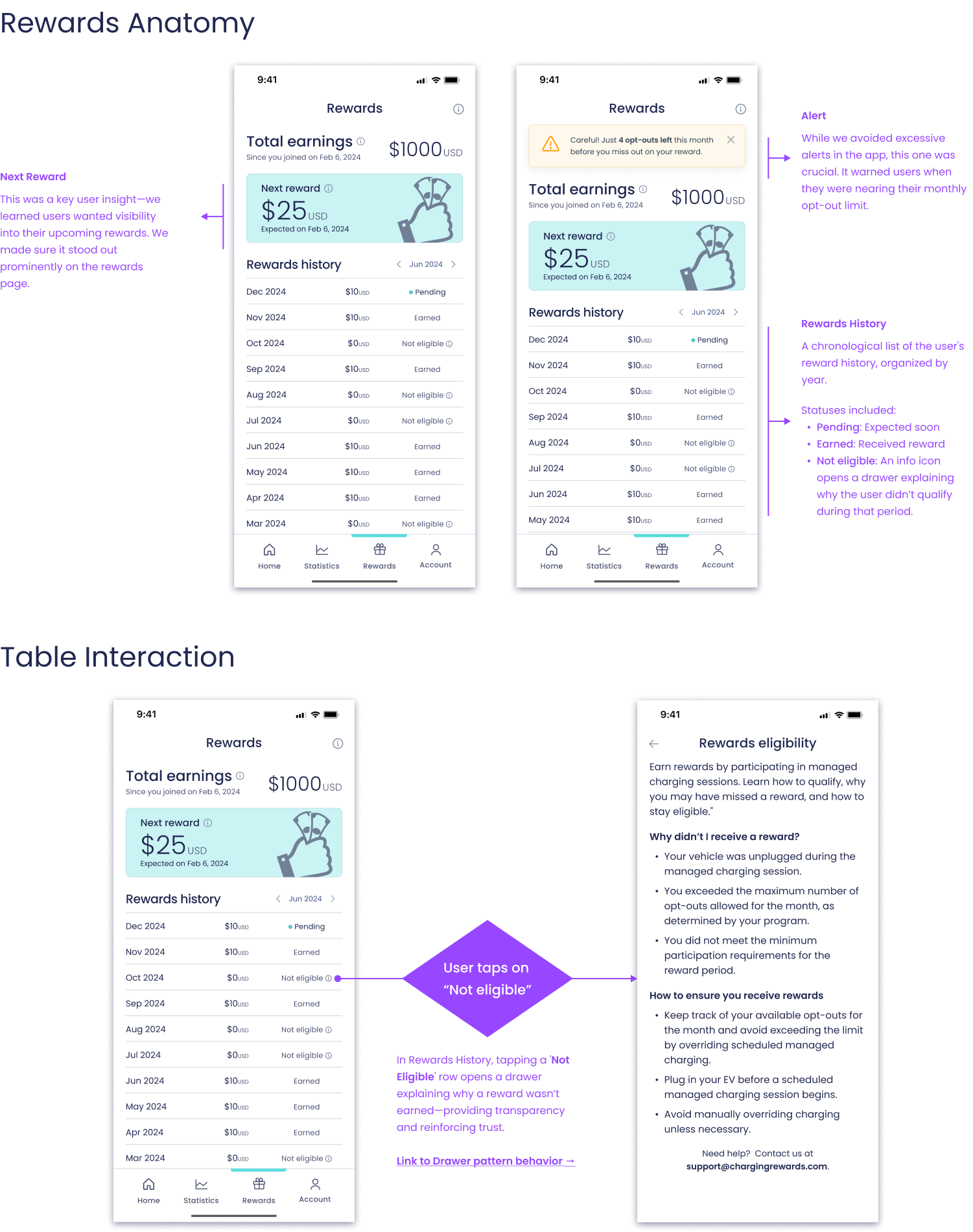

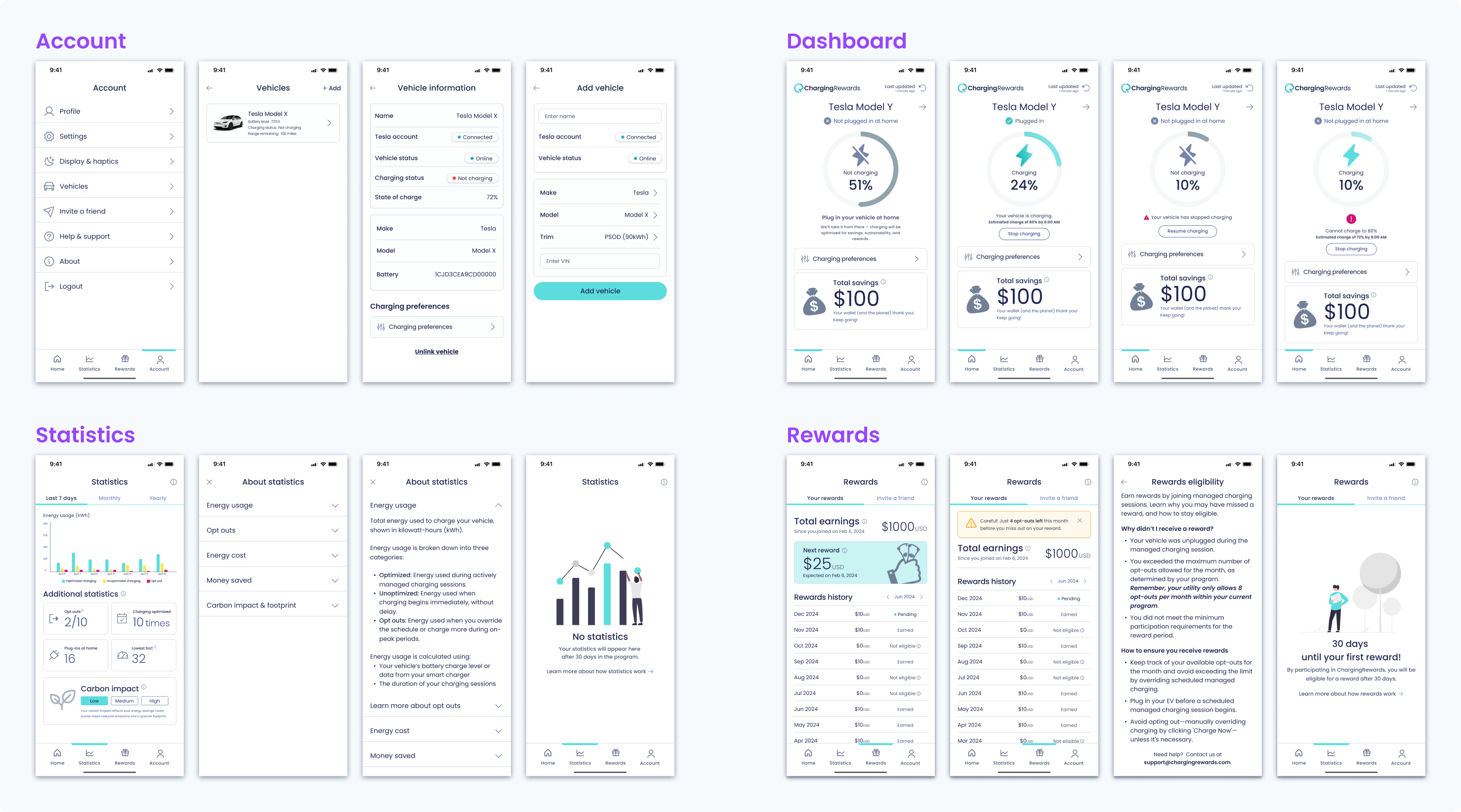

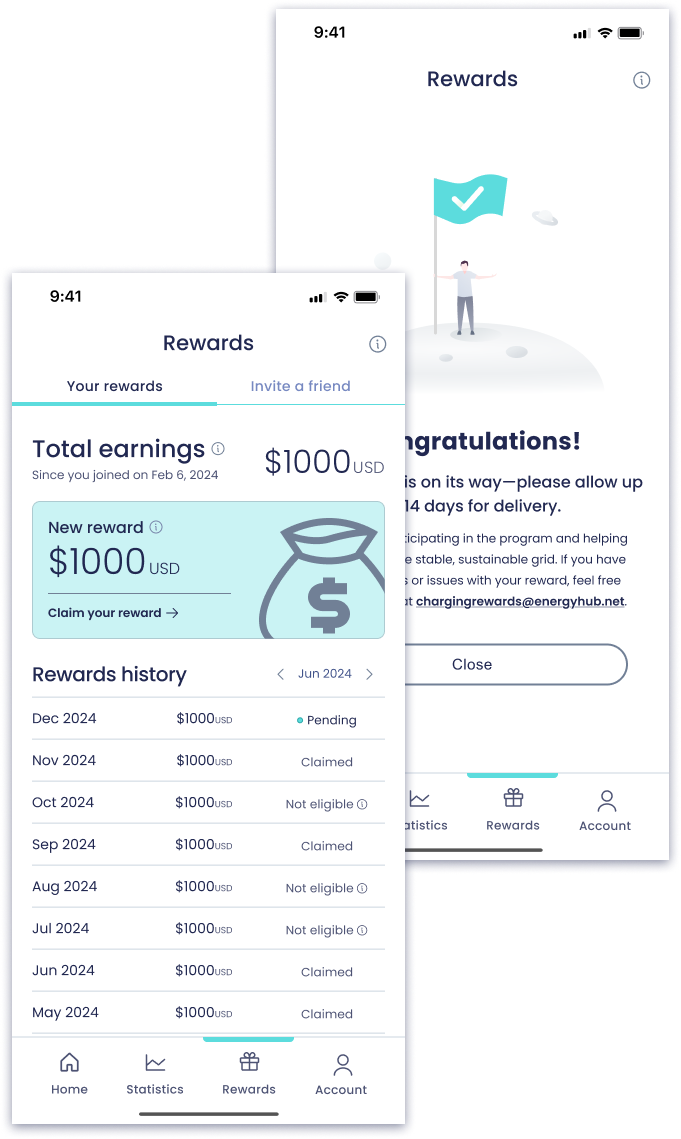

With the most immediate trust gaps addressed, I shifted focus to redesigning the core product, anchoring it around four key behavioral pillars. The new Dashboard made the current charging state clear at a glance. Schedule Control let users adjust their charging preferences safely. Statistics combined energy usage trends, carbon impact, and historical charging behavior to reinforce confidence through transparent, user-prioritized data. And Rewards made benefits tangible, trackable, and motivating.

Design reviews and usability testing sessions helped validate decisions and keep the work grounded in real user needs.

Designing for Impact

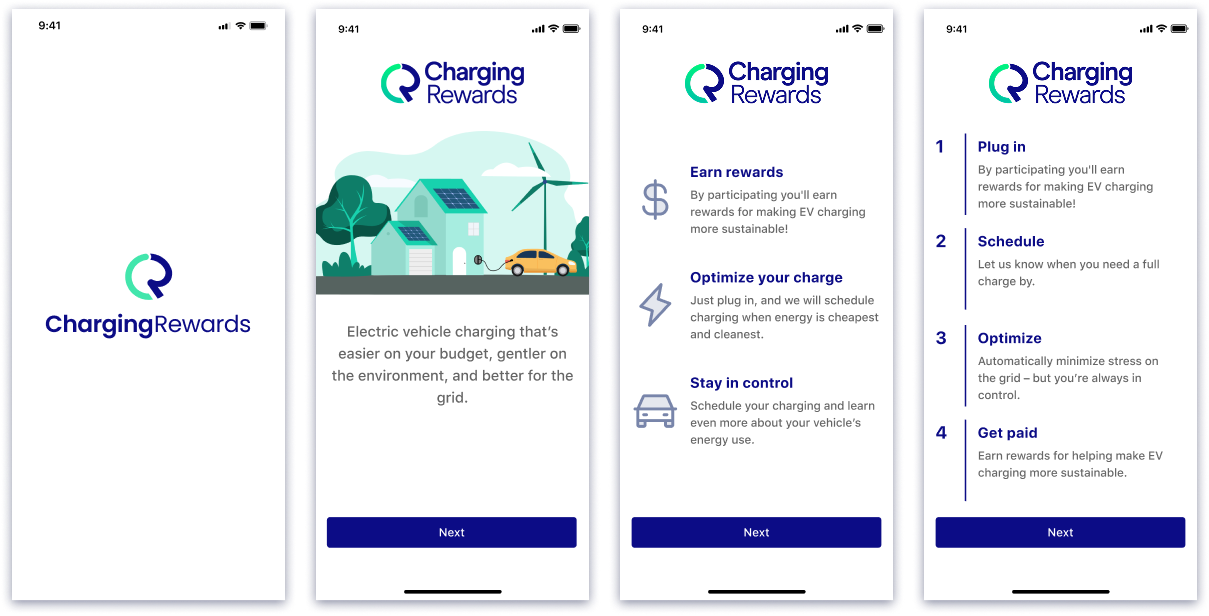

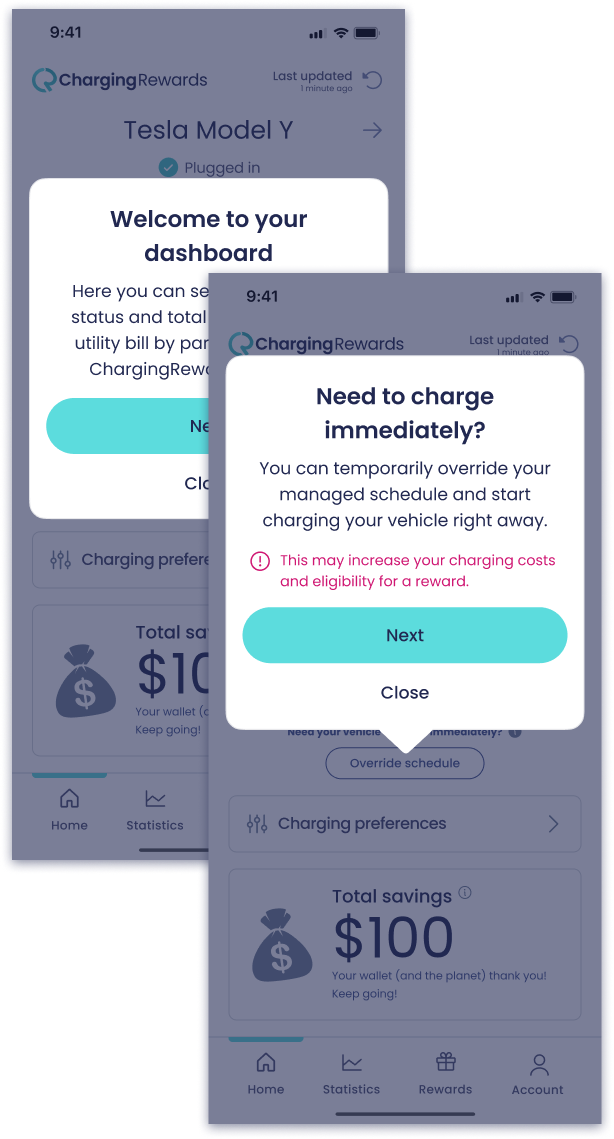

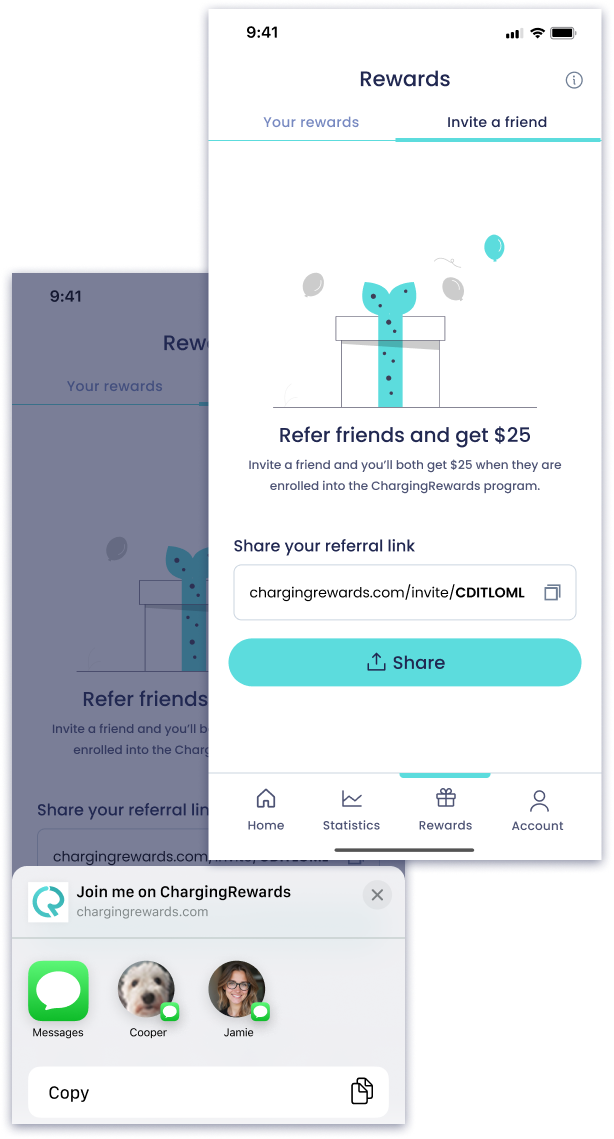

Beyond core screens, I looked for ways to improve the product experience holistically—boosting engagement, reducing confusion, and adding long-term value. These additions weren’t part of the original scope, but I pushed to deliver them because they solved real user problems and aligned with business goals.

Testing, Iterations & Delivery

Testing

To move quickly, we ran parallel research: moderated sessions via UserZoom and unmoderated flows in Maze.

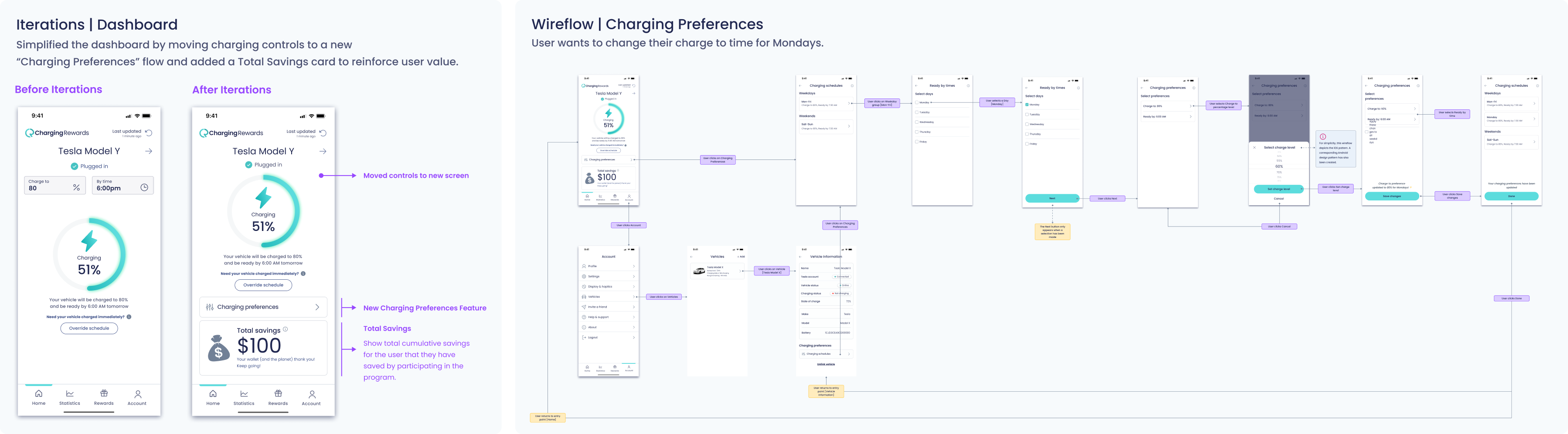

One key insight: users rarely adjusted “Charge To” or “Ready By” parameters—they valued flexibility, but without friction. I moved these into a dedicated Preferences flow, simplifying the dashboard and aligning with user mental models.

Iterations

I refined the Dashboard to include a “Total Savings” card—giving users a clear sense of financial value earned.

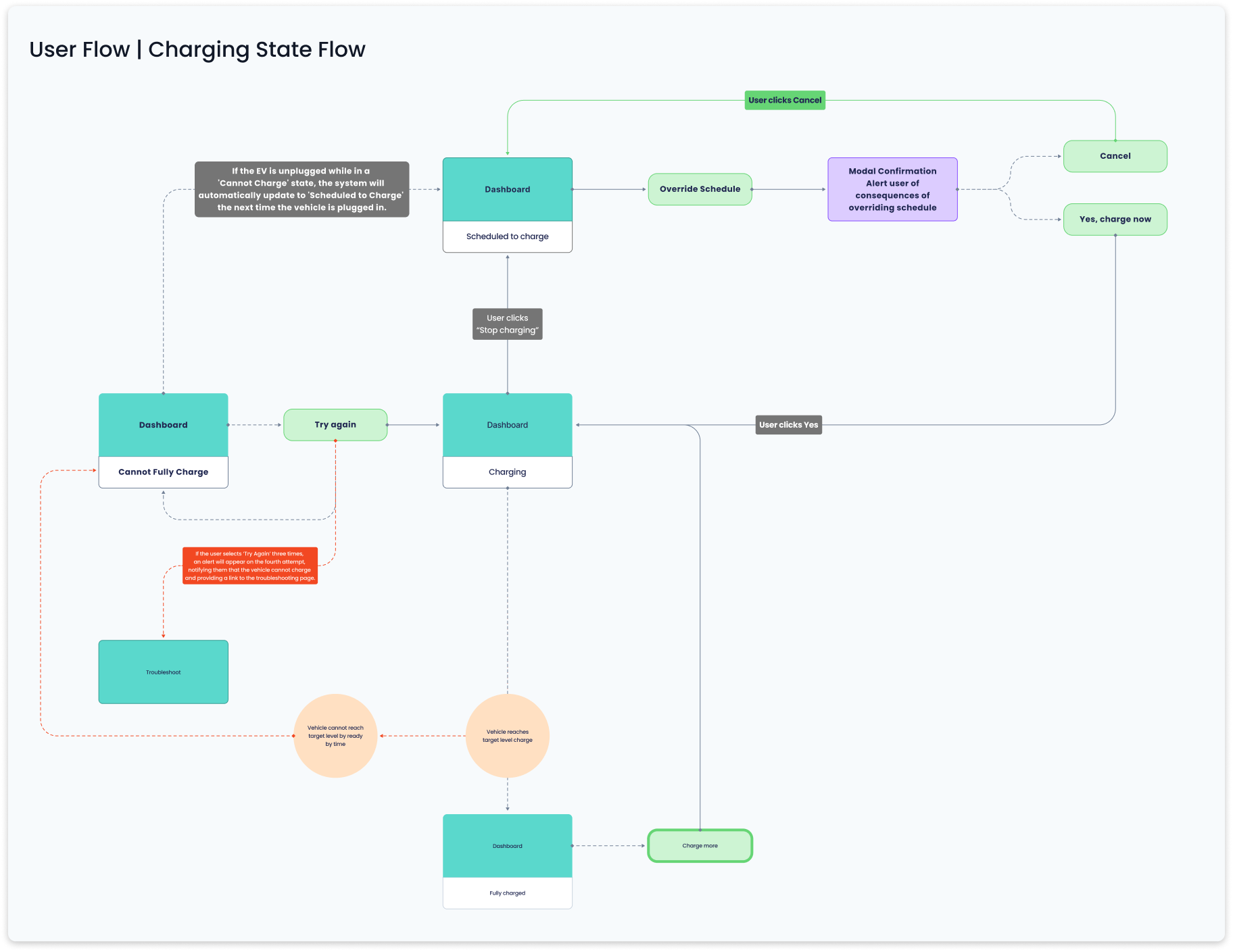

I also redesigned Charging Preferences to be more focused, flexible, and easier to maintain. Throughout, I supported engineering with annotated wireframes and system-behavior wireflows, reducing ambiguity and speeding up handoff.

Delivery

These are just a subset of the final designs delivered across the product. I designed every core screen, edge case, and system state, ensuring consistency, clarity, and scalability across the experience.

What’s shown here includes key states for Account, Dashboard, Statistics, and Rewards—as well as patterns like drawers, empty states, and dynamic charging feedback.

Outcome

Conclusion

ChargingRewards was a masterclass in solving complex, real-world problems with scrappy, research-driven design. By aligning business needs with user trust and motivation, we created a scalable product that supports critical infrastructure and rewards drivers. This project sharpened my leadership in fast-paced, resource-constrained environments, deepened my strategic design thinking, and underscored the power of user trust in driving product success.